AI will make your company feel slower

Companies are machines for agreement and agreement is expensive

You ask the question the way people ask questions they don’t want answered.

It’s after your third meeting of the day. You have a small change in mind. Not a rewrite. Not a new feature. A tweak. A button label. A default. A line of copy. You did the responsible thing: you wrote it down, made it clear, included screenshots, and tried to be specific enough that nobody could hide behind “unclear requirements” or a blocked “definition of ready”.

And still, someone says, “Cool. We can probably get this into next sprint.”

Words that sound normal until you say them out loud.

You don’t argue. You do the polite nod. But inside, something shifts, because you know something now that you didn’t know a year ago. You’ve watched a machine turn a rough thought into a first draft in minutes. You’ve seen it outline a plan, summarize a thread, draft the email you’ve been avoiding, and generate a plausible first pass of code. Now you know it’s capable of far more than a first draft. So your brain does the math.

If we can do this in an afternoon, what exactly are we waiting for?

That’s the question you don’t want to ask in the meeting, because the answer isn’t flattering. The answer isn’t “we’re busy”.

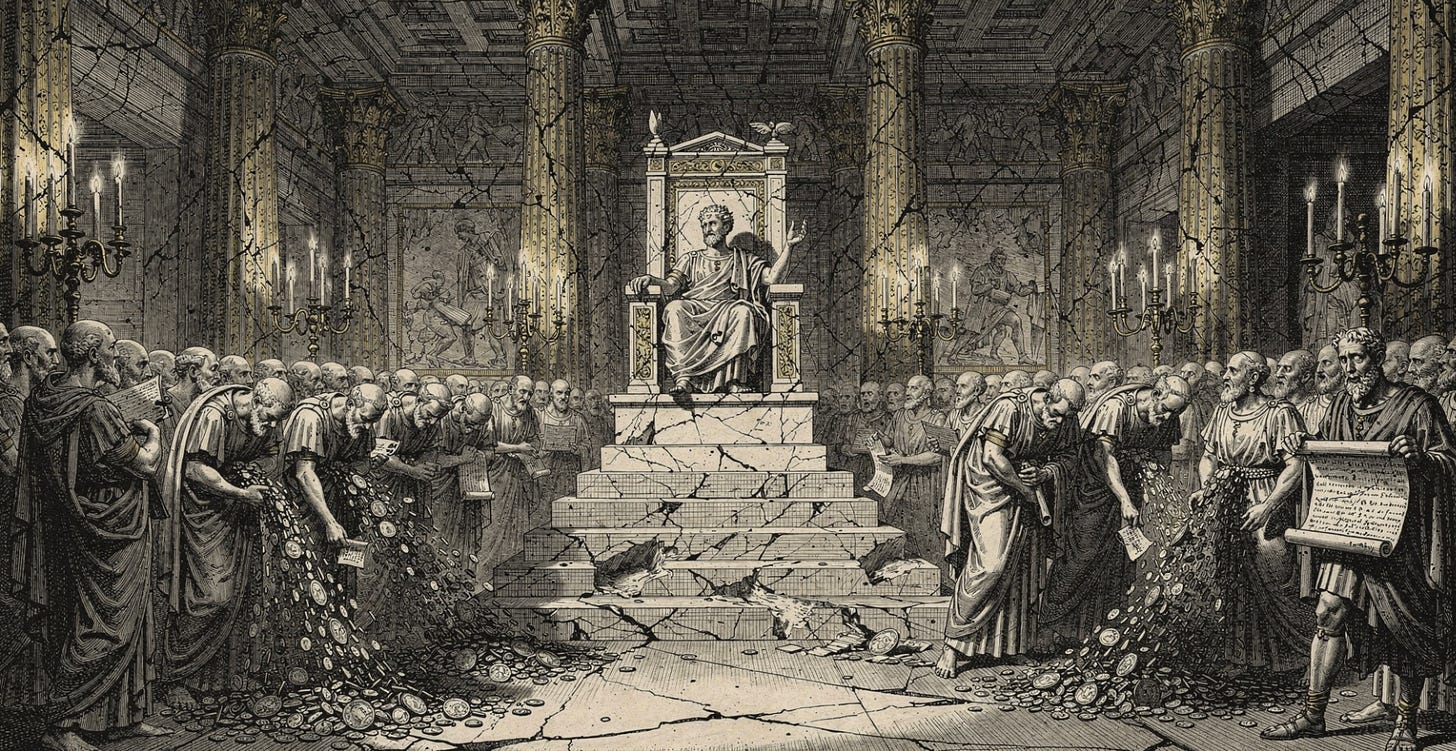

The answer is that the company is slow because the company is a machine for agreement, and agreement is expensive.

On a small team, you can change the button because everyone who matters is basically in the same room, even if the room is Slack. People share the same picture of reality. They know what the product is. They know why the button exists. They know what breaks if you touch it. They trust each other’s judgment. So the change is simple:

decide, do, ship.

Then the company grows and the picture stops fitting in one head.

Now the same change needs a chain of “yes.” You need design, product, engineering, analytics, maybe legal, maybe brand. None of those functions are ridiculous. The ridiculous part is the hidden work you’ve created: you are no longer changing a button, you are trying to synchronize five different mental worlds.

This is why AI will make companies feel slower. Not because AI fails to make anything faster, but because it makes the individual parts fast enough that the organization’s real speed limit becomes visible.

For years, companies could blame latency on execution. Engineering takes time. Writing takes time. Research takes time. Analysis takes time. Drafts take time. AI collapses a lot of that early work. It compresses “starting”. It compresses “first pass”. It compresses “show me options.”

You can see it in how real people are already working. A common pattern is separating planning from execution: a human decides what matters and what constraints exist, a coding agent does the first pass of the labor, then the human iterates with judgment. One clean example of that mindset is this recent Claude Code workflow write-up, which frames the split explicitly as “planning” vs “execution”:

The Workflow in One Sentence

Read deeply, write a plan, annotate the plan until it’s right, then let Claude execute the whole thing without stopping, checking types along the way.

That’s it. No magic prompts, no elaborate system instructions, no clever hacks. Just a disciplined pipeline that separates thinking from typing. The research prevents Claude from making ignorant changes. The plan prevents it from making wrong changes. The annotation cycle injects my judgement. And the implementation command lets it run without interruption once every decision has been made.

You can also see it in the broader push to compress cycle times: getting from intent to a concrete artifact quickly, then spending human energy on the last mile. I wrote about this earlier as “compressed cycle times” and why they’re so powerful when you actually experience the new baseline.

The world’s intelligence is not selfish. It created lower things for the sake of higher ones, and attuned the higher ones to one another. Look how it subordinates, how it connects, how it assigns each thing what each deserves, and brings the better things into alignment. — V. 30

So why doesn’t the whole company speed up?

When execution gets cheap, what’s left is the hard part we don’t like to talk about. The company’s speed limit becomes specification clarity, shared context, decision rights, and risk ownership.

In other words: shared reality.

This is the part that confuses everyone right now. People are living in different climates. If you spend your day inside a tight loop where you can draft and iterate quickly, the company’s delays stop looking like “the cost of work” and start looking like “the cost of being organized.” You begin to feel like the organization is wasting your life.

But there’s a reason the organization insists on going slow, and it’s not always stupidity. At scale, the most expensive thing isn’t building. It’s recovering.

If a bad change goes out in a small team, you can often undo it quickly because the same people who made the decision feel the pain and can reverse it. In a large organization, the blast radius grows. A small change can create a support storm, a security incident, a legal headache, a brand embarrassment, or a reliability outage. So large organizations build governance systems that are designed to ship not just product, but non-events: no scandals, no breaches, no outages, no lawsuits. That need for legibility creates latency.

Even if you accept that, you still feel the frustration. Why does it take *this* long? Why does it feel like nobody can say yes?

This is where incentives enter.

In large organizations, speed is politically expensive. Fast decisions have fewer witnesses. Fewer witnesses means fewer shields. If it goes wrong, the blame has a clean address. Slow decisions spread responsibility. The “yes” gets diluted into a room. The failure becomes a “process failure,” not a person.

Most people aren’t playing this game because they’re evil. They’re playing it because they like paying rent.

That’s also why the most confusing phrase in modern work is “high agency.” We use it like it’s a personality trait. But in many companies, “agency” means “you have enough power and cover to take risk without getting punished.”

AI doesn’t eliminate the need for alignment, but it will amplify the cost of misalignment.

If tools can generate output faster than teams can agree on what they want, you get more motion with less direction. You get more drafts with less clarity. You get more code with less understanding. You get more decisions made on vibes because the pace makes it hard to stop and think. This tweet recently captured this succinctly:

This is why I think the best teams in the next few years won’t be the ones with the most AI. They’ll be the ones with the strongest shared mind.

A “shared mind” isn’t a nice-to-have. It’s the only way to keep speed from becoming chaos. Teams don’t just share a repo. They share a living model of the system, the domain, and each other. When that model is strong, you can move quickly with fewer meetings that end in disagreement. When it’s weak, every change becomes a negotiation. I wrote about this as “shared understanding is the product” and why it matters more than code.

So what should we reasonably expect?

We should expect a strange phase where individuals feel superhuman and organizations feel stuck. People will say, “I can do this in an afternoon: why can’t we ship it?” Leaders will respond with some version of “we need to be careful,” which will sound like cowardice. Both will be partly right.

We should also expect companies to respond with more rules, not fewer. When output gets cheap, verification becomes expensive. So organizations will build more approval gates, more policy, more auditing, more provenance. You can see early hints of these borders forming when platforms start restricting automation and agentic usage (even for paying customers) because the system can’t tell what’s safe, what’s abuse, and who is responsible [1].

If you want companies to actually get faster, the solution isn’t “tell everyone to use AI.” It’s to lower the cost of agreement.

That means reducing coordination surface area per unit output. It means thin interfaces, clear ownership, explicit constraints, and a culture that rewards learning instead of blame avoidance. It means building fast rollback so speed isn’t recklessness. It means treating context like capital.

AI will not automatically make companies faster.

It will make them honest about what was always slow.

[1] One concrete discussion thread along these lines: https://discuss.ai.google.dev/t/account-restricted-without-warning-google-ai-ultra-oauth-via-openclaw/122778